The process of iteration

Experimentation and iteration are always a big part of our process when it comes to working with new tech. This was especially true when we stepped back into the realm of augmented reality with Nespresso AR. For this project, we had to do a lot of R&D and testing to discover the possibilities of both the software and the hardware, and how these elements could integrate with one another.

Nespresso AR was a short project with a lot going on. If you haven’t seen the end result yet, there’s a short demo video here. Otherwise, we thought we’d ask some of the team to explore their top learnings from the R&D process, in the hopes they might help others working in the space.

Read on and you’ll find more about how we:

- Put together a workable AR production pipeline for mobile

- Created fluid simulations in Houdini and exported them to Unreal Engine

- Developed a solution for good-looking transparency and refraction in AR

Nespresso Vertuo AR

Augmented reality reinvigorates Nespresso staff training workshops.

A quick + dirty AR production pipeline

First up, a few thoughts from Nic Ferrar, real-time developer, organiser of the Unreal Sydney meetup and recent expert in integrating complex pipelines into mobile AR.

Upon receiving the Nespresso AR brief, we had to choose a production pipeline as soon as possible. This would help maximise our actual production time while also ensuring the client could organise hardware for deployment. Our initial thinking was that we’d have to balance the capabilities of the device against the ease of developing AR on a quick turnaround. With these limitations in mind, we came to a short list of options:

- Android tablets with the AR handled by ARCore via Unreal Engine

- Android tablets with the AR handled by Vuforia via Unreal Engine

- Windows tablets with the AR handled by the ARToolKit via Unreal Engine

- Windows tablets with the AR handled by Vuforia via Unity

To start, Android tablets offer a more straightforward and approachable production process. ARCore provides more out-of-the-box features for rapid development, as well as a custom fork of the Unreal Engine specifically for AR development. Android tablets are also significantly cheaper than Windows tablets, making them the more attractive option for the client. Their shortcoming is graphical rendering capability, which is more limited than that of Windows tablets. However, testing made it clear that with the move to OpenGL ES 3 on mobile devices, we were not as limited as we had originally feared.

When comparing AR development platforms, we found that the project’s requirements of image recognition, planar tracking, and persistent AR elements almost immediately ruled out ARToolKit. That left us to choose between Vuforia and ARCore. Both have similar capabilities. However, given our choice of Android tablets, we leaned more heavily towards ARCore, as it is natively supported by Android and requires less technical (and bureaucratic) setup.

Lastly, with those two components locked down, we decided on Unreal Engine as our development package. It has impressive out-of-the-box Android/ARCore support and graphical appeal, and it is the platform we’re most experienced with as a team. From there it was a simple matter of grabbing the latest ARCore Engine fork and beginning development. Within a few days we had functional AR proof-of-concepts using markers, planar tracking, persistent AR, and baked vertex animations working on our target hardware, ready for us to dive into full-blown production.

Fluid simulations + particle effects on mobile

Next up is Inge Berman, art director for our real-time team and the person responsible for figuring out how to get coffee-inspired particles from Houdini to Unreal.

Alongside decoding and translating our client’s hopes and dreams, my job on this project was to find a way to create fluid simulations made up of particles in Unreal Engine. These simulations needed to strike the right mix of detail and density, balancing the fluid simulation itself against the swirling particles around it.

Staying as true to the client’s vision as possible, we decided to begin by playing with Houdini, as fluids and particle effects are something Houdini is incredibly good at. The challenge here was not in generating the effects themselves but in getting them into Unreal Engine and optimising them for mobile.

The basic plan was to develop a pipeline to export Houdini fluid simulations into Unreal Engine and to also generate ambient particles to accompany these simulations.

I began by tackling the Houdini to Unreal problem. I found a very effective pipeline for baking simulations to vertex textures using Houdini’s Game Development Toolset, which was much cheaper than just using Alembic files. After importing these into Unreal, I applied a pseudo particle effect material to the mesh to give our simulations the desired particle mesh look and editability.
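To illustrate the general idea of baking a simulation to a vertex texture, here is a minimal Python sketch. It is not the Houdini tool's actual export format: the function names (`bake_vertex_animation`, `sample_vertex`), the 8-bit quantisation and the single shared value range are all assumptions made for illustration. Each texture row holds one frame, each texel holds one vertex's offset from the rest pose, and a shader-like lookup rebuilds the position.

```python
def bake_vertex_animation(frames, bits=8):
    """Quantise per-frame vertex offsets into an integer 'texture'.

    frames: list of frames, each a list of (x, y, z) tuples with the same
    vertex count. Frame 0 is treated as the rest pose (an assumption).
    Returns (texture, lo, span); texture[frame][vertex] is an (r, g, b) texel.
    """
    rest = frames[0]
    offsets = [
        [tuple(p[i] - r[i] for i in range(3)) for p, r in zip(frame, rest)]
        for frame in frames
    ]
    values = [c for frame in offsets for v in frame for c in v]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0          # avoid dividing by zero for static meshes
    levels = (1 << bits) - 1         # 255 for an 8-bit channel
    texture = [
        [tuple(round((c - lo) / span * levels) for c in v) for v in frame]
        for frame in offsets
    ]
    return texture, lo, span


def sample_vertex(texture, rest, lo, span, frame, vertex, bits=8):
    """Rebuild a vertex position from the texture, as a vertex shader would."""
    levels = (1 << bits) - 1
    texel = texture[frame][vertex]
    return tuple(r + (t / levels) * span + lo for r, t in zip(rest[vertex], texel))


# Two vertices over two frames: both move upward in the second frame.
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 1.0, 0.0), (1.0, 0.25, 0.0)],
]
texture, lo, span = bake_vertex_animation(frames)
rebuilt = sample_vertex(texture, frames[0], lo, span, frame=1, vertex=1)
```

The appeal of this kind of approach on mobile is that playback is only a texture read per vertex, with no simulation running on the device. The trade-off is quantisation error, which shrinks as the channel bit depth grows.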

Back in Houdini, I used various other simulations such as swirling smoke and whirlpools to generate vector fields which drove the accompanying particle effects in Cascade.
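As a rough picture of how a vector field can drive particles, the sketch below builds a small 2D whirlpool field and pushes particles through it. This is an illustrative assumption only: the fields used in production came from Houdini simulations and were volumetric, and the names `whirlpool_field`, `sample_field` and `advect`, along with the `swirl` and `pull` parameters, are hypothetical.

```python
import math


def whirlpool_field(size, swirl=1.0, pull=0.2):
    """Build a size x size grid of 2D velocities circling the grid centre."""
    centre = (size - 1) / 2.0
    field = []
    for y in range(size):
        row = []
        for x in range(size):
            dx, dy = x - centre, y - centre
            # (-dy, dx) spins around the centre, (-dx, -dy) pulls inward.
            row.append((-dy * swirl - dx * pull, dx * swirl - dy * pull))
        field.append(row)
    return field


def sample_field(field, x, y):
    """Bilinearly interpolate the velocity at a point, clamped to the grid."""
    size = len(field)
    x = min(max(x, 0.0), size - 1.0)
    y = min(max(y, 0.0), size - 1.0)
    x0 = min(int(x), size - 2)
    y0 = min(int(y), size - 2)
    tx, ty = x - x0, y - y0

    def lerp(a, b, t):
        return a + (b - a) * t

    top = [lerp(field[y0][x0][i], field[y0][x0 + 1][i], tx) for i in range(2)]
    bottom = [lerp(field[y0 + 1][x0][i], field[y0 + 1][x0 + 1][i], tx) for i in range(2)]
    return tuple(lerp(top[i], bottom[i], ty) for i in range(2))


def advect(field, particles, dt=0.05, steps=100):
    """Move each particle along the field with simple Euler steps."""
    for _ in range(steps):
        moved = []
        for px, py in particles:
            vx, vy = sample_field(field, px, py)
            moved.append((px + vx * dt, py + vy * dt))
        particles = moved
    return particles


field = whirlpool_field(9)
start = [(8.0, 4.0)]
end = advect(field, start)
centre = 4.0
start_radius = math.hypot(start[0][0] - centre, start[0][1] - centre)
end_radius = math.hypot(end[0][0] - centre, end[0][1] - centre)
```

The particles carry no behaviour of their own here. All of the motion comes from the field, which is what makes a simulated field a convenient way to hand a particular look from one tool to another.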

Altogether, these effects generated some really beautiful results.

Transparency + opaque refraction in AR

Last but not least, tech artist and real-time developer David Grouse takes you through his solution to getting transparency and refraction working in AR.

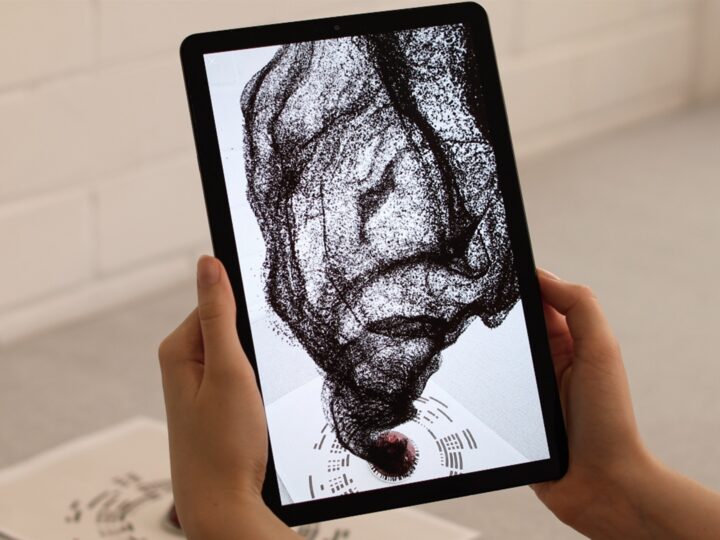

While prototyping the look of fluid and glass surfaces for Nespresso AR, we found we needed a solution for good-looking transparency and refraction in AR on OpenGL ES 3.1. To get there, I came up with a really simple technique for faking transparency and refraction at very little GPU cost.

(If you’re more technically minded and interested in a more in-depth tutorial, you might want to check out the blog below instead.)

Firstly, since the backdrop of AR is the tablet’s camera image, I can project that image onto opaque surfaces to make them look translucent. This comes with its limitations of course, since an opaque surface cannot render any other surface behind it. Luckily, in the Nespresso AR project, there are few instances where objects go behind each other.
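The projection step can be pictured with a small Python sketch. It assumes the surface point is already in clip space and that the camera image is a simple grid of pixels. The function names `screen_uv` and `sample_camera` are hypothetical, and the real effect would live in a material or shader rather than in Python.

```python
def screen_uv(clip_pos):
    """Convert a clip-space position (x, y, z, w) to 0..1 screen UVs."""
    x, y, _z, w = clip_pos
    ndc_x, ndc_y = x / w, y / w               # perspective divide, range -1..1
    u = ndc_x * 0.5 + 0.5
    v = 1.0 - (ndc_y * 0.5 + 0.5)             # flip so v = 0 is the top row
    return (u, v)


def sample_camera(image, uv):
    """Nearest-pixel lookup into the camera image, clamped to its edges."""
    height, width = len(image), len(image[0])
    u = min(max(uv[0], 0.0), 1.0)
    v = min(max(uv[1], 0.0), 1.0)
    col = min(int(u * width), width - 1)
    row = min(int(v * height), height - 1)
    return image[row][col]


# A 2 x 2 stand-in for the camera feed, labelled by corner.
camera = [["top-left", "top-right"],
          ["bottom-left", "bottom-right"]]

# A surface point that lands in the top-left corner of the screen.
colour = sample_camera(camera, screen_uv((-1.0, 1.0, 0.0, 1.0)))
```

Because the surface simply shows the camera pixel directly behind it, it reads as see-through while still being drawn as an opaque object.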

This method cannot physically refract the camera image the way real glass or liquid would. More importantly, though, physical accuracy is not the aim. Our goal is to reveal an object’s form by creating some sort of distortion in the AR camera image.

The most powerful element in 3D graphics for revealing an object’s form is its surface normals (the direction vectors perpendicular to the object’s surface). By using surface normals relative to the camera, I can distort the camera image in a way that reveals the object’s form and, with some artistic tweaking, produces an image that looks close to realistic refraction.
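One way to read this idea is as an offset applied to the screen position before the camera image is sampled. The sketch below is a guess at that shape rather than the actual material. The `refracted_uv` name and the `strength` parameter are hypothetical, and the normal is assumed to already be in view space, with x pointing right, y up and z towards the camera.

```python
import math


def refracted_uv(uv, view_normal, strength=0.05):
    """Nudge screen UVs sideways according to how the surface tilts.

    uv: (u, v) screen coordinates in 0..1, with v growing downward.
    view_normal: surface normal in view space (assumed convention above).
    strength: artistic control for how strong the distortion looks.
    """
    nx, ny, nz = view_normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz) or 1.0
    nx, ny = nx / length, ny / length
    u = uv[0] + nx * strength
    v = uv[1] - ny * strength                 # minus because v grows downward
    # Clamp so the lookup never leaves the camera image.
    return (min(max(u, 0.0), 1.0), min(max(v, 0.0), 1.0))


facing = refracted_uv((0.5, 0.5), (0.0, 0.0, 1.0))    # surface faces the camera
tilted = refracted_uv((0.5, 0.5), (1.0, 0.0, 0.0))    # surface edge, facing right
```

A surface facing the camera leaves the image untouched, while surfaces that curve away bend it more and more towards the silhouette. That variation across the object is what lets the eye pick out its shape.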

For more information about any of the techniques we’ve discussed here, send us an email. And, while you’re here, subscribe below to get more insights from Nic, Inge, David and the rest of the S1T2 team.